Can a Computer Have a Mind?

The question of whether a mechanical device could ever be said to think – perhaps even to experience feelings, or to have a mind – is not really a new one. But it has been given a new impetus, even an urgency, by the advent of modern computer technology.

The question touches upon deep issues of philosophy. What does it mean to think or to feel? What is a mind? Do minds really exist? Assuming that they do, to what extent are minds functionally dependent upon the physical structures with which they are associated? Might minds be able to exist quite independently of such structures? Or are they simply the functionings of physical structures? In any case, is it necessary that the relevant structures be biological in nature (brains), or might minds equally well be associated with pieces of electronic equipment? These are among the issues I shall be attempting to address in this article.

Consider a hypothetical case in which a new model of computer has come on the market, with a size of memory store and number of arithmetic and logical units in excess of those in a human brain. Suppose also that these computers have been carefully programmed and fed with great quantities of data of an appropriate kind. The manufacturers are claiming that the devices actually think. They are also claiming them to be genuinely intelligent.

Or they may go further and suggest that the devices actually feel – pleasure, pain, compassion, pride, etc. – and that they are aware of, and actually understand, what they are doing. Indeed, the claim seems to be being made that they are conscious.

How are we to tell whether or not the manufacturers’ claims are to be believed? Ordinarily, when we purchase a piece of machinery, we judge its worth solely according to the service it provides us. If it satisfactorily performs the task we set it, then we are well pleased. To test the manufacturers’ claim that such a device actually has the asserted human attributes we would, according to this criterion, simply ask that it behave as a human being would in these respects. In other words, we ask the computer to produce human-like answers to any question that we may care to put to it, and if it answers our questions in a way indistinguishable from a human being, then the claim that it indeed thinks (or feels, understands, etc.) is satisfied.

To verify this claim, the computer and some human volunteer are both to be hidden from the view of some (perceptive) interrogator. The interrogator has to try to decide which of the two is the computer and which is the human being merely by putting probing questions to each of them. These questions, and more importantly the answers that the interrogator receives, are all transmitted in an impersonal fashion, say typed on a keyboard and displayed on a screen. The interrogator is allowed no information about either party other than that obtained from this question and answer session. The human subject answers the questions truthfully and tries to persuade the interrogator that he is indeed the human being and that the other subject is the computer; the computer, however, has been programmed to ‘lie’ so as to try to convince the interrogator that it, instead, is the human being. If in the course of a series of such tests the interrogator is unable to identify the real human subject in any consistent way, then the computer is deemed to have passed the test.
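The pass criterion described above can be sketched as a small simulation. This is only an illustration under stated assumptions: the function names, the `p_spot_computer` parameter (the chance that the computer gives itself away in a session) and the 5% margin are all hypothetical, and a whole question and answer session is reduced to a single random draw.

```python
import random

def interrogator_accuracy(p_spot_computer, n_trials=10_000, seed=0):
    """Fraction of sessions in which the interrogator names the human correctly.

    p_spot_computer is a hypothetical parameter: the probability that the
    computer gives itself away during one session. When it does not, the
    interrogator is assumed to fall back on a coin-flip guess.
    """
    rng = random.Random(seed)
    correct = 0
    for _ in range(n_trials):
        if rng.random() < p_spot_computer:
            correct += 1              # the computer gave itself away
        elif rng.random() < 0.5:
            correct += 1              # a lucky guess
    return correct / n_trials

def passes_test(accuracy, margin=0.05):
    """The computer passes if the interrogator does no better than chance.

    The margin is an arbitrary tolerance chosen for this sketch.
    """
    return accuracy <= 0.5 + margin

# A computer that never gives itself away, and one that usually does.
print(passes_test(interrogator_accuracy(0.0)))
print(passes_test(interrogator_accuracy(0.8)))
```

The point of the sketch is only that the test is statistical: a single lucky or unlucky session decides nothing, and what matters is whether the interrogator can beat chance over a series of sessions.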

Now, it might be argued that this test is actually quite unfair on the computer. For if the roles were reversed, so that the human subject were instead being asked to pretend to be a computer and the computer to answer truthfully, then it would be only too easy for the interrogator to find out which is which. All that he would need to do would be to ask the subject to perform some very complicated arithmetical calculation. A good computer should be able to answer accurately at once, but a human would be easily stumped. (One might have to be a little careful about this, however. There are human ‘calculating prodigies’ who can perform very remarkable feats of mental arithmetic with unfailing accuracy and apparent effortlessness. For example, Tathagat Avtar Tulsi, a PhD student in the department of physics, Indian Institute of Science, is able to multiply any two random numbers in less than a minute.)

Thus, part of the task for the computer’s programmers is to make the computer appear to be ‘stupider’ than it actually is in certain respects. For if the interrogator were to ask the computer a complicated arithmetical question, then the computer must now pretend not to be able to answer it. Though this may give an eerie impression that the computer has some understanding, in fact it has none and is merely following some simple mechanical rules. The task of making the computer ‘stupider’ in this way is not a serious problem facing the computer’s programmers. The main difficulty is to make it answer some of the simplest ‘common sense’ types of question – questions that the human subject would have no difficulty with whatsoever!
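How simple such a mechanical rule could be is suggested by the following sketch. Everything in it is an assumption made for illustration: the question format it recognises, the `max_digits` threshold and the canned replies are hypothetical, and a real program would need to handle far more than this.

```python
import re

# Hypothetical question format: "What is <number> + <number>?" or with "*".
_PATTERN = re.compile(r"\s*what is (\d+)\s*(\+|\*)\s*(\d+)\s*\?\s*", re.IGNORECASE)

def humanlike_reply(question: str, max_digits: int = 2) -> str:
    """Answer small sums, but feign difficulty with large ones."""
    match = _PATTERN.fullmatch(question)
    if match is None:
        return "I'm not sure what you mean."
    left, op, right = match.group(1), match.group(2), match.group(3)
    # The rule: anything with more digits than a person would manage is declined.
    if max(len(left), len(right)) > max_digits:
        return "That's too hard to do in my head."
    a, b = int(left), int(right)
    return str(a + b if op == "+" else a * b)

print(humanlike_reply("What is 12 * 3?"))
print(humanlike_reply("What is 48371 * 90217?"))
```

The machine could of course compute the second product instantly. The refusal is produced by a digit count, not by any sense of what is hard.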

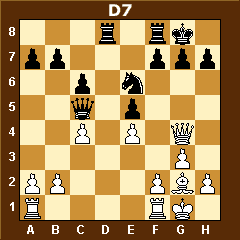

Chess-playing computers probably provide the best examples of blunders on some of the simplest ‘common sense’ types of move, blunders which a human subject would never make. In the figure below, which represents a chess board, “OO” stands for an empty square, “BK” is the Black King, “BR” is a Black Rook, “BB” is the Black Bishop and “BP” is a Black Pawn (all the queens and knights are gone). The pieces marked with “W” are the white pieces, which are all pawns (“WP”) and one king (“WK”).

OO OO OO OO OO OO BK BR

OO OO OO OO OO BB OO BP

OO OO BP OO OO OO BP WP

BR BP WP BP OO BP WP OO

BP WP OO WP BP WP OO OO

WP OO OO OO WP OO OO OO

OO WK OO OO OO OO OO OO

OO OO OO OO OO OO OO OO

The situation here is that the white pawns form an impenetrable barrier for their king, boxing in all the black pieces so that they can’t make any moves to take any of the white pieces. Even though the black side has more powerful pieces left (two rooks and a bishop), there is no way for them to get through the white pawns and place the white king in checkmate, since they cannot take any of the white pawns, and the white king is safe as long as he remains behind the pawns, moving around freely. (Well, yes, it is only a draw, but that is better than utter defeat.) But the Deep Thought computer***, playing the white side, did not grasp this. Instead, it took the black rook, thus opening up the barrier of pawns, and it was all lost from there.

It is worth remarking that chess-playing machines fare better on the whole, relative to a comparable human player, when the moves are required to be made very quickly; the human players perform relatively better in relation to the machines when a good measure of time is allowed for each move. (Please refer to the earlier article entitled “Are Computer Games Really Conscious” for a detailed mathematical understanding.) This is because the computer’s decisions are made on the basis of precise and rapid extended computations, whereas the human player takes advantage of ‘judgements’ that rely upon comparatively slow conscious assessments. The human judgements serve to cut down drastically the number of serious possibilities that need be considered at each stage of calculation, and when the time is available much greater depth can be achieved in the analysis than by the machine’s simply calculating and directly eliminating possibilities without using such judgements.
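The arithmetic behind this point can be made concrete with a rough sketch. The figures are assumptions chosen only for illustration: about 30 legal moves per position for a machine that considers everything, about 3 ‘serious’ candidates per position for a player whose judgement discards the rest, and a budget of one million positions. The function names are likewise hypothetical.

```python
def positions_examined(branching: int, depth: int) -> int:
    """Count the positions in a full game tree searched `depth` moves ahead."""
    return sum(branching ** d for d in range(1, depth + 1))

def reachable_depth(branching: int, budget: int) -> int:
    """Deepest full lookahead whose position count fits within `budget`."""
    depth = 0
    while positions_examined(branching, depth + 1) <= budget:
        depth += 1
    return depth

BUDGET = 1_000_000                   # assumed number of positions that can be examined
print(reachable_depth(30, BUDGET))   # every legal move considered -> 4
print(reachable_depth(3, BUDGET))    # only a few serious candidates -> 12
```

Under these assumed numbers, the same amount of calculation looks three times as far ahead once most moves are set aside at each step. The sketch says nothing about where the judgement comes from; it only shows why cutting down the possibilities buys depth.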

The essential point here is that the quality of human judgement and understanding, which springs from consciousness, is an essential thing that the computer lacks.

O son of Bharata, as the sun alone illuminates all this universe, so does the soul, one within the body, illuminate the entire body by consciousness – Lord Krsna [Bg 13.34]

***Programmed by Hsiung Hsu of Carnegie Mellon University, Deep Thought has a rating of about 2500 Elo and recently achieved the remarkable feat of sharing first prize (with Grandmaster Tony Miles) in a chess tournament (in Long Beach, California, November 1988), actually defeating a Grandmaster (Bent Larsen) for the first time!

Please research artificial intelligence and how deep learning, machine learning and neural networks work. I do not mean to criticise the author of this post, but it contains a lot of grammatical errors, and logical errors in the field of artificial intelligence.

There’s a difference between a logic-based program and a program based on neural networks. I myself work in the field of artificial intelligence, and neural networks are based on the neurons of the human brain. It’s like how a child grows and learns through experiences. Neural networks learn the same way through data sets, which are what experiences are for a human child, and this is called machine learning. We don’t have to code these experiences; the neural network itself learns through these data sets and makes its own decisions.

Please research this topic to learn more about neural networks. Link: https://en.wikipedia.org/wiki/Generative_adversarial_network

Consciousness is a kind of information processing which is done in the presence of external stimuli, with sensory input and output organs.

A brain removed from a body does not have thoughts, even though it stays alive when supplied with blood, because it has no external stimuli or sensory input or output device to operate with.

Self-driving cars that work on AI don’t need to be programmed; they start by driving a car themselves. They learn like a kid: at first they don’t even know how to move a car, but in a matter of time they learn through a data set made of input from cameras and output electrical signals.

This video will explain to you how a neural network learns to play Mario like a child, without any code written to play Mario: https://www.youtube.com/watch?v=qv6UVOQ0F44&t=14s

The article is pretty outdated: instead of talking about artificial intelligence and neural networks, it’s talking about logical computer programs.

Please take a look at AlphaGo, a neural network that plays Go, a game which isn’t logic-based but intuition-based and which needs actual thinking to play.

Please take a look at this video on how we copied the neurons from the brain of a simple worm and put them in a robot, and the robot started acting like a worm. We didn’t write any code to make it do so. But when we copied the neural code from the brain of the worm into a C algorithm and provided it with sensory input and output devices, it started acting like a worm. I actually worked on this project. What does this mean? https://www.youtube.com/watch?v=YWQnzylhgHc

This is a video in which we alter and mimic the neurological electric signals sent by the brain to do various things: https://www.youtube.com/watch?v=rSQNi5sAwuc and https://www.youtube.com/watch?v=pvBlSFVmoaw

Our DNA is made up of 4 bases: adenine, thymine, guanine and cytosine. When we have a disease, or when we learn things, our DNA updates its code; this is called learning from experiences. In the same way, artificial intelligence neurons learn from data sets too, and this is called machine learning.

You are speaking all rubbish.

The brain has nothing whatsoever to do with intelligence. The mind and the intelligence are not in the brain. So you can not create artificial intelligence by mimicking the brain.

Intelligence and mind are on the subtle platform, something that science is yet to discover. So it is like a child seeing the cars passing by on the road and thinking the cars are driving themselves. No. The cars are being driven by the driver. Similarly the brain is a machine, a way the soul interfaces with the senses of the material body and thus perceives the material world.

But the brain does not think, feel or will. That is done by the mind, and the intelligence makes the decision to either accept or reject the suggestions of the mind. The brain can not do anything itself; it is a machine that has to be worked by the soul.

Learning is not in the DNA, learning is in the soul. Everything you write here is just childish insane rubbish.

I was very interested by the philosophical implications of this article, but I feel that although you introduced the topic very well through the discussions of the Turing test and algorithmic thinking, you failed to answer the question posed at the beginning.

You asked “can a computer have a mind?” and ultimately concluded the article with this:

“The essential point here is that the quality of human judgement and understanding, which springs from consciousness, is an essential thing that the computer lacks.”

Your definition of human judgment:

“the computer’s decisions are made on the basis of precise and rapid extended computations, whereas the human player takes advantage of ‘judgements’ that rely upon comparatively slow conscious assessments”.

You didn’t go into any detail about the difference between a human judgment and a mechanical calculation, or why one is more “conscious” than the other. Is not a computer capable of some forms of judgment, and a human capable of some forms of calculation? Surely so. So why is one conscious and another unconscious?

Like I said, you introduced the topic in a way that was easy to understand and pretty interesting, but you failed to answer the question in the title. In fact, all of the questions in the first paragraph were exactly the questions I ask myself, but you concluded the article just short of answering a single one. I am disappointed because I was deeply interested in all of these questions.

I see a comment talking about the soul. That is yet another question on the stack to be answered: Is a soul required for consciousness? Is a soul different from a consciousness?

In my opinion, you have only started this article. If you finish, I will be very interested.

Hare Krishna Micah

It is not my article actually, and I agree with you that it is not very completely explained.

The difference between a computer and a person is a computer is a machine designed by a person that runs a program written by a person whereas a person is a person who can design a machine, write a program and instruct the machine to run that program.

The person is a living entity. The person is a spirit soul. The missing point, that is not understood by the scientists, is that there are two types of energy, the material energy and the spiritual energy. The person is spiritual energy and he is completely distinct from the material energy. So because the person is spiritual he is an individual who has desires, who can think, feel and will, and who can dream and work to achieve his desires.

So it is the spirit soul who is the person. And spirit is totally different from matter. Spirit is not composed of nor can it be imitated by any combination of earth, water, fire, air and ether.

So this lack of understanding about what intelligence is means it is totally impossible for the scientists to make artificial intelligence.

Of course you can make ‘artificial intelligence’, just like you can make artificial grass by shredding some green plastic and gluing it to some rubber mat… And if you stand far away enough from it it may look a bit like grass…

So consciousness is the symptom of the presence of the spirit soul. Without the presence of the spirit soul there can be no consciousness. A machine does not have, and never will have, a spirit soul. So a machine is not and never will be conscious.

Of course to some extent our material body is a machine. But the material body is not conscious. It is not the material body that is working. It is the soul who is working the material body, just like the car is not moving itself. The car is moving under the direction of the driver who is conscious.

Chant Hare Krishna and be happy!

Madhudvisa dasa

Your point about spirituality makes sense. However, in regard to your analogy of artificial intelligence, I don’t think it’s true.

We can make artificial grass. It won’t grow or live like grass does unless we start with life. Scientists may be able to modify grass to make it grow larger or faster, but they can’t create it from anything besides grass.

Intelligence is different. We did not start from a brain to grow a mind. We started from sand and copper. And we currently have machines that in various ways are more intelligent than humans – be it in maths like you mentioned, or in their ability to organize things, or to predict, or to remember. Plastic and glue makes poor artificial grass, but I dare say that using the same materials, we have made minds more powerful than our own. Doesn’t that preclude the necessity of a driver?

Now, to defeat this argument, you could argue that we have done the same thing as with the grass: breeding better grass from natural grass, designing intelligence with intelligence that naturally occurs in humans. Fine. But the whole point of artificial intelligence as opposed to normal programming is that the machine is to progress to a point past its programming. It doesn’t remember the past sequences of chess plays and pick one. It learns the rules, attempts to model and predict, and makes a move. Artificial intelligence exists (cite machine learning) that knows more at the end of its program than it knew at the beginning. In other words, the machine is smarter than when the human designed it and turned it on. That is intelligence. And that, I don’t think, can be explained away by the need for a consciousness which is bound to a soul. So is there such a thing as a consciousness without a soul?

Hare Krishna Micah

You have missed the main point. Consciousness is a symptom of the presence of the spirit soul. And intelligence is not a function of the mind, it is a function of the spirit soul.

This is a very important mistake that the AI scientists have made. They think intelligence is a function of the brain and they are trying to imitate the brain by constructing neural networks similar to the ones they find in the brain in a hope of creating intelligence. But the brain has nothing to do with consciousness or intelligence. It is a machine only, like a computer. It has some memory and can do some processing. But like the computer it is operated by an intelligent person. And that intelligent person is a spirit soul.

Computers can not learn, computers have no consciousness, computers have no awareness, all they can do is run a set of instructions written by a person.

‘Machine learning’ is much overrated. Artificial intelligence will never be any better than artificial grass. It may look a little like intelligence from a distance. That is all they will ever be able to achieve.

Intelligence is a function of the spirit soul. And the spirit soul has nothing to do with matter and can not be imitated by matter.

Yes. You can make a computer that can do calculations fast, that can store a lot of information and search through the information. But it is all done by programs that are written by people and run by people. All the machine can do is execute instructions written by people when the people run the programs…

You are too young of course. But since the 1960’s, for more than 60 years now, the scientists have been announcing that they will have intelligent computers within a year or two. But they have never made any real progress on this. And it is because they do not understand that intelligence is not a function of the brain, it is a function of the spirit soul. So they can not create intelligence by trying to imitate the brain. They are completely in ignorance as to what intelligence is. So it is not possible for them to create artificial intelligence that is any better than artificial plastic grass…

Chant Hare Krishna and be happy!

Madhudvisa dasa

You can say that we are incapable of creating artificial intelligence, but we have, and it works. In ways, it’s smarter than conscious people, and independent of them. We can’t define them as conscious, sure, but they are most certainly intelligent and lacking a soul. You can’t possibly deny this in the face of what we have built?

Hare Krishna Micah

We have not built any artificial intelligence at all! We have written computer programs and those programs run on computers. That’s all. You can write a program that mimics human behavior, you can write a program that ‘learns’. But you can not write a program that is intelligent.

You claim we have created artificial intelligence. If so where is it? Please give me the proof, let me know where it is and I will test it and see if it is actually intelligent.

Of course we can create artificial grass by shredding green plastic and gluing it to a backing material and putting it on the ground. But apart from a slight similarity with real grass when viewed from a distance, our artificial grass is nothing like real grass…

So our artificial intelligence is no better than our artificial grass, at least at the current time.

Chant Hare Krishna and be happy!

Madhudvisa dasa

Hare Krishna Prabhu

AGTSP!

You said something that was bothering me:

“And intelligence is not a function of the mind, it is a function of the spirit soul.”

As I understand it, the philosophy of material elements accepted by the Vaishnav acaryas and propounded by Prabhupada holds that intelligence is a material element, or more precisely a component of the subtle body, so it should have nothing to do with the spirit soul at all. The spirit soul is sat cid anand and completely different in nature from any material element, whether subtle or gross.

Also, AI is actually able to emulate the intelligence of a person very, very well, but again it is just emulating and has no elements of mind and false ego with which to function on its own (not to mention a spirit soul). So it will be limited to very gross mundane tasks (and that only after a great deal of training). The differences will be seen when AI has to do tasks that need to emulate all aspects of a human completely, which would be impossible solely because it lacks the required subtle elements in full. For example, it should not be able to perform any system of yoga on its own or reach conclusions in such a manner. In the end it is an emulation of certain aspects of humans, but due to their poor fund of knowledge (about the workings of the material world) these scientists cannot actually understand this.

You are speaking rubbish. First you should know then speak. Intelligence is there in the soul, everything is there in the soul, not that the soul has no intelligence. What do you think the soul is if it has no intelligence? Nothing can be there without the soul. The subtle elements are coming from the soul in touch with matter. Yes. Material intelligence is there, but spiritual intelligence is there.

We have to understand mind is thinking feeling and willing, it is a subtle material element, but thinking feeling and willing also exist in the spirit soul, and intelligence is simply accepting and rejecting. So the task of intelligence is to evaluate and decide to either accept or reject the propositions put to it by the mind. That is why we have the idea of controlling the mind. The mind is controlled by the intelligence. Or, for a materialistic person, the intelligence is controlled by the mind.

So these very basic points you do not seem to understand at all.

Also you do not understand that they do not have any artificial intelligence. They don’t even know what intelligence is. So how can they hope to make artificial intelligence? They have to make an artificial mind first. They have no idea how it works, or even where it is. They are chopping up brains to try and find intelligence, but the mind and intelligence are not in the brain…

All they have is computers running code written by people. And of course you can do wonderful things with computers running code written by people. But all you have is computers running code written by people.

Chant Hare Krishna and be happy!

Madhudvisa dasa

AI can only imitate; it lacks the principles or motives of a human being. This would be seen when AI is given a complex task which involves human life at a very complex level, say running a city: it cannot account for human motives or principles, or for the basic symptoms of the spirit soul which are present even in conditioned living beings.

It is just like demons who imitate Vaishnavs: they may be proficient in all the scriptures memory-wise, but they lack the principle or motive to use them correctly, so they misuse them. Here, similarly, the AI won’t misuse it; rather, it will lack it. AI cannot imitate the higher level of intelligence which is present in subtle terms, and it also lacks a mind and false ego and, most importantly, a spirit soul to have any motives. So it is just a limited emulation for gross activities.

You misunderstand. There is no AI, there are just computer programs running code written by people. So all computers can do is run code written by people. That code can imitate intelligent behavior. And if you like you can call this artificial intelligence, just like you can call shredded pieces of green plastic ‘artificial grass’… But a computer running code is no more like intelligence than green shredded plastic is like grass. Actually there is no artificial grass and no artificial intelligence…

You are just trying to imitate a particular part of human consciousness, that’s all; nothing new is being invented under the sun.

Your suggestions are very valuable. I enjoy the letter, as it gives some spirit to my mind.

Here is the answer. Let’s look at the following conversation of Srila Prabhupada:

But they are rascal. It is not the brain that is working. It is the spirit soul that is working. The same thing: the computer machine. The rascal will think that a computer machine is working. No. The man is working. He pushes the button, then it works. Otherwise, what is the value of this machine? You keep the machine for thousands of years, it will not work. When another man will come, put the button, then it will work. So who is working? The machine is working or the man is working? And the man is also another machine. And it is working due to the presence of Paramatma, God. Therefore, ultimately, God is working. A dead man cannot work. So how long a man remains living? So long the Paramatma is there, atma is there. Even the atma is there, if Paramatma does not give him intelligence, he cannot work. Mattah smrtir jnanam apohanam ca [Bg. 15.15]. God is giving me intelligence, “You put this button.” Then I put this button. So ultimately Krsna is working. Another, untrained man cannot come and work on it because there is no intelligence. And a particular man who is trained up, he can work. So these things are going on. Ultimately comes to Krsna. What you are researching, what you are talking, that is also Krsna is doing. Krsna is giving you in… You, you prayed for this facility to Krsna. Krsna is giving you. Sometimes you find accidentally the experiment is successful. So when Krsna sees that you are so much harassed in experimental, “All right do it.” Just like Yasoda Ma was trying to tie Krsna, but she could not do. But when Krsna agreed, it was possible. Similarly, this accident means Krsna helps you: “All right, you have worked so hard, take this result.” Everything is Krsna. Mattah smrtir jnanam apohanam ca [Bg. 15.15]. Everything is coming from Krsna.

{source : https://prabhupadavani.org/main/Walks/MW016.html }

__________________

Now let’s see another example from Srila Prabhupada:

The same example. Just like computer machine. you do not find that the machine is made by a brain which is different from this material. But you are trying to find out a brain from this. This is your childish thinking. The brain is different from machine. The machine is lump of iron. And the one who is working with the machine is a different from the machine. That you do not know. That you do not know. That is your defect. Now what is this computer machine will do unless there is a worker in the computer room, highly salaried man?

{Source : http://prabhupadabooks.com/classes/philosophy/syamasundara/charles_darwin?page=2 }

___________________

Do you understand now what Prabhupada says about computers?